Active inference

The ideas expressed on this page depend on all previous content in the Knowledge Center.

Active inference is a model of human and animal behavior developed by the neuroscientist Karl Friston and his collaborators. In active inference, we treat actions as variables in our model that can be inferred using Bayesian inference, just like any other variable. The models used by active inference agents differ from other kinds of models in Genius in the following important ways:

Active inference agents use models that include the notion of time, which makes them dynamic rather than static.

Active inference agents use models that enable them to decide between courses of action to achieve a particular goal.

Active inference agents use models that assume that the true state of a process being modeled is an unobserved or latent variable.

Importantly, active inference agents can go beyond simply deciding on courses of action and actually execute them, provided that they have a physical means to interact with the environment. This matters because it makes active inference agents adaptable: they learn about the external environment through interaction. An active inference agent continuously updates its model of the external environment through feedback from the real world.

Furthermore, active inference agents can achieve their goals without explicit instruction, decision-making rules, or heuristics. Given a particular goal, the agent will explore its environment to determine the optimal way to achieve this goal and, when it is confident, it will pursue the goal. If the environment is novel and the agent does not yet know enough about it, the agent will first learn how the environment functions.

The external environment

In order for an active inference agent to decide on courses of action and, optionally, execute these actions, there must be some physical process external to the agent, an environment, for it to act on. Examples of environments include:

A warehouse in which an agent must navigate to a particular location to drop off an item

A business inventory for a grocery store that must be stocked in a particular way depending on consumer demands

The environment could also be simulated as demonstrated in the agent navigation and multi-armed bandit examples.

We assume that the external environment is in a particular state at any given moment in time, and that this state changes over time. This process, known as a state transition, is assumed to be unknown to the agent, which is realistic for many real-world problems. When the environment transitions to a new state, it generates some kind of observation (data) that the agent can use in active inference. The agent can then use its model to infer the state that caused the data it observed.

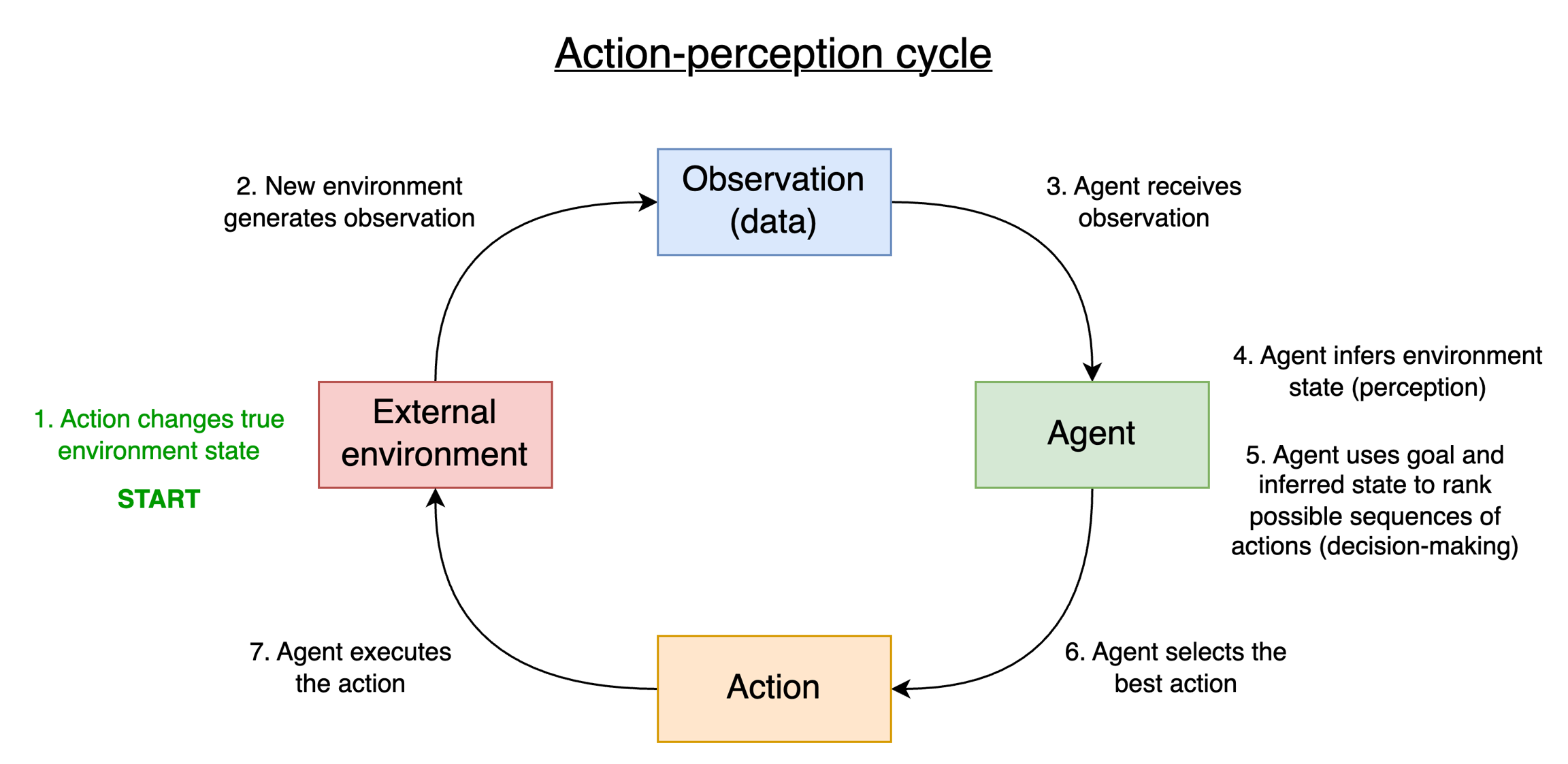
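The environment described above can be sketched as a small simulation. This is only an illustration, assuming a two-state weather process; the names `TRANSITION`, `EMISSION`, `sample`, and `step_environment` and all probabilities are hypothetical and are not part of Genius.

```python
import random

STATES = ["raining", "not raining"]
OBSERVATIONS = ["wet", "not wet"]

# Hypothetical probabilities, chosen only for illustration.
# TRANSITION[s][s2] = P(next state = s2 | current state = s)
TRANSITION = {
    "raining": {"raining": 0.7, "not raining": 0.3},
    "not raining": {"raining": 0.2, "not raining": 0.8},
}
# EMISSION[s][o] = P(observation = o | state = s)
EMISSION = {
    "raining": {"wet": 0.9, "not wet": 0.1},
    "not raining": {"wet": 0.2, "not wet": 0.8},
}

def sample(dist, rng):
    """Draw one outcome from a {outcome: probability} dictionary."""
    outcomes = list(dist)
    weights = [dist[o] for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]

def step_environment(state, rng):
    """Advance the hidden state by one time step and emit an observation."""
    next_state = sample(TRANSITION[state], rng)
    observation = sample(EMISSION[next_state], rng)
    return next_state, observation

rng = random.Random(0)
state = "raining"
history = []
for t in range(5):
    state, obs = step_environment(state, rng)
    history.append((state, obs))  # an agent would only ever see `obs`
```

The hidden state is kept inside the simulation; only the observation would be passed to an agent, which mirrors the assumption that the true state is latent.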

The steps of active inference

As we saw in the previous tutorial, the agent relies upon a special kind of probabilistic model called a partially observable Markov decision process (POMDP). Using this model, the agent performs the following steps:

Observation - Receive observation from the environment

Perception - Determine the state of the environment that generated that observation

Decision-making - Based on a goal and the current state of the environment, decide possible courses of action and rank them

Action selection - Select the best action

Action execution - Optionally, execute the action if the agent has a way to interact with the environment

Learning - Update the probabilities in the model

We briefly investigate each of these steps below.

Observation and perception

First, the external environment we are modeling transitions to a new state at the next moment in time. When the environment is in this new state, a new observation is generated. The agent then receives this data ("observation"). For example, if the environment transitioned from the state "raining" to "not raining", the agent would then receive an observation of the ground being either "wet" or "not wet".

With this data in hand, the agent uses Bayesian inference to determine its belief about the state that generated the observation ("perception"). This Bayesian inference process is necessary because of our assumption that the agent does not have access to, or knowledge of, the true state of the external environment ("raining" or "not raining"). It only has access to the observation that is generated as a consequence of the environment being in that state ("wet" or "not wet").

In addition to the observation, the agent takes its prior over the initial state into account when judging which state is likely. After all, there is no guarantee that the observation it has just received is of good quality, since we are operating under conditions of uncertainty.
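As a worked illustration of perception, the sketch below applies Bayes' rule to the "raining" / "wet" example. The prior and likelihood values and the function name `infer_state` are assumptions made for this example only.

```python
def infer_state(prior, likelihood, observation):
    """Return P(state | observation) using Bayes' rule."""
    # Numerator of Bayes' rule: P(observation | state) * P(state)
    unnormalized = {s: likelihood[s][observation] * prior[s] for s in prior}
    # Denominator: the evidence P(observation)
    evidence = sum(unnormalized.values())
    return {s: p / evidence for s, p in unnormalized.items()}

# Hypothetical numbers, chosen only for illustration.
prior = {"raining": 0.3, "not raining": 0.7}
likelihood = {
    "raining": {"wet": 0.9, "not wet": 0.1},
    "not raining": {"wet": 0.2, "not wet": 0.8},
}

posterior = infer_state(prior, likelihood, "wet")
# posterior["raining"] = 0.27 / 0.41, roughly 0.66
```

Even though the prior favors "not raining", observing "wet" shifts the belief toward "raining". With a more extreme prior, the same observation would move the belief less, which is how the prior guards against unreliable observations.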

Note that in practice, the agent uses an approximate form of Bayesian inference known as variational inference. Variational inference minimizes an objective function known as variational free energy to approximate the parameters of the distribution over the variable being inferred. Since exact Bayesian inference can be computationally expensive, variational free energy minimization provides a much cheaper approximate way of determining a probability distribution given some observation.
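To make the idea concrete, the sketch below evaluates the variational free energy F(q) = Σ_s q(s) [ln q(s) − ln p(o, s)] for the two-state rain example and finds its minimum with a brute-force search over candidate beliefs. The numbers are hypothetical, and the grid search is used only for clarity; a practical implementation would be expected to use an iterative optimizer instead.

```python
import math

# Hypothetical model, chosen only for illustration.
prior = {"raining": 0.3, "not raining": 0.7}
p_wet_given = {"raining": 0.9, "not raining": 0.2}  # P(o = "wet" | s)

def free_energy(q):
    """F(q) = sum_s q(s) * (ln q(s) - ln p(o, s)) for the observation "wet"."""
    total = 0.0
    for s, q_s in q.items():
        if q_s > 0.0:  # the term 0 * ln 0 is treated as 0
            joint = p_wet_given[s] * prior[s]
            total += q_s * (math.log(q_s) - math.log(joint))
    return total

# Brute-force search over candidate beliefs q(raining) = 0.001 ... 0.999.
candidates = [i / 1000 for i in range(1, 1000)]
best = min(
    candidates,
    key=lambda r: free_energy({"raining": r, "not raining": 1 - r}),
)
min_f = free_energy({"raining": best, "not raining": 1 - best})

# At the exact posterior, F equals the negative log evidence -ln p(o).
neg_log_evidence = -math.log(0.9 * 0.3 + 0.2 * 0.7)
```

The belief that minimizes F lands next to the exact posterior (0.27 / 0.41 for "raining"), and the minimum value of F sits just above the negative log evidence, which it can never go below.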

Decision-making, action-selection, and execution

Once the agent has determined the state of the environment, it can now decide what course of action to take. This is accomplished using its preferences, which encode what kind of observation it would like to obtain. This point is somewhat subtle and can be reasoned through in a series of steps:

The agent considers a list of possible actions it could take.

For each action in the list, the agent infers what state the environment would be in if that action were taken (future state inference).

For each state under consideration, the agent uses its likelihood to determine what observation would be generated (future data conditioning).

The agent compares this predicted observation to its preferences. If they match, the action is ranked higher than the other possible actions.

As we can see, the decision-making process ranks each candidate action by how consistent the observations it is expected to produce are with the observations the agent would like to receive from the environment.
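The four steps above can be sketched as follows. This is a simplified illustration that scores actions by preferences alone, assuming a hypothetical warehouse agent with two locations; the matrices `B`, `A`, `C`, the helper names, and all probabilities are assumptions made for this example.

```python
import math

STATES = ["at_shelf", "at_dropoff"]
OBS = ["see_shelf", "see_dropoff"]

# Hypothetical model, chosen only for illustration.
# B[action][s][s2] = P(next state s2 | current state s, action)
B = {
    "stay": {"at_shelf": {"at_shelf": 1.0, "at_dropoff": 0.0},
             "at_dropoff": {"at_shelf": 0.0, "at_dropoff": 1.0}},
    "move": {"at_shelf": {"at_shelf": 0.1, "at_dropoff": 0.9},
             "at_dropoff": {"at_shelf": 0.9, "at_dropoff": 0.1}},
}
# A[s][o] = P(observation o | state s), the likelihood
A = {"at_shelf": {"see_shelf": 0.9, "see_dropoff": 0.1},
     "at_dropoff": {"see_shelf": 0.1, "see_dropoff": 0.9}}
# C[o] = how strongly the agent prefers observation o (a distribution)
C = {"see_shelf": 0.1, "see_dropoff": 0.9}

def predict_state(belief, action):
    """Future state inference: belief over the next state if `action` is taken."""
    return {s2: sum(belief[s] * B[action][s][s2] for s in STATES) for s2 in STATES}

def predict_obs(state_belief):
    """Predicted observation distribution for a belief over states."""
    return {o: sum(state_belief[s] * A[s][o] for s in STATES) for o in OBS}

def preference_score(belief, action):
    """Expected log-preference of the observations predicted under `action`."""
    predicted = predict_obs(predict_state(belief, action))
    return sum(predicted[o] * math.log(C[o]) for o in OBS)

belief = {"at_shelf": 0.95, "at_dropoff": 0.05}  # current belief about the state
ranking = sorted(B, key=lambda a: preference_score(belief, a), reverse=True)
# ranking == ["move", "stay"]
```

Because the agent believes it is at the shelf and prefers to see the drop-off, "move" is expected to produce observations closer to its preferences and is ranked first.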

However, active inference agents do not only take actions to pursue their goals. Active inference agents are also curious and may choose actions that enable the exploration of the environment. They may do this because:

Curious agents can better adapt to goals in a changing and uncertain environment

Curious agents can gather information about their environment to more confidently and reliably attain their goals

Curious agents can become very efficient by learning what is important and relevant about their environment

Active inference agents thus automatically balance two competing criteria, reward-seeking and information-seeking, and rank actions according to which criterion is more important at a given time. Sometimes it may be more important to explore for more information, while at other times it may be more important to pursue a reward. Active inference agents strike this balance without explicit specification from the modeler and do not require a customized model for every scenario at hand. This underscores the power and generalizability of active inference models.

In practice, balancing reward-seeking against information-seeking when ranking actions is accomplished with a quasi-objective function known as expected free energy. Expected free energy is so named because it applies to future states of the environment, for which the active inference agent has not yet received observations.
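One common way to write expected free energy for a single action is as the sum of two terms: risk, the divergence between predicted and preferred observations, and ambiguity, the expected uncertainty of the observations in the predicted states. The sketch below computes this decomposition for a hypothetical three-state model. It is an assumption-laden illustration, not necessarily the exact formulation used in Genius, and all names and numbers are made up for the example.

```python
import math

def expected_free_energy(q_states, A, C):
    """Return (G, risk, ambiguity) for one candidate action.

    q_states[s] : predicted probability of state s after the action
    A[s][o]     : likelihood P(observation o | state s)
    C[o]        : preferred distribution over observations
    """
    n_states, n_obs = len(q_states), len(C)
    # Predicted observation distribution under this action.
    q_obs = [sum(q_states[s] * A[s][o] for s in range(n_states))
             for o in range(n_obs)]
    # Risk: KL divergence between predicted and preferred observations.
    risk = sum(q * (math.log(q) - math.log(C[o]))
               for o, q in enumerate(q_obs) if q > 0)
    # Ambiguity: expected entropy of the likelihood in the predicted states.
    entropy = [-sum(p * math.log(p) for p in row if p > 0) for row in A]
    ambiguity = sum(q_states[s] * entropy[s] for s in range(n_states))
    return risk + ambiguity, risk, ambiguity

# Hypothetical model: states are (at_shelf, at_dropoff, dark_corridor).
A = [[0.9, 0.1], [0.1, 0.9], [0.5, 0.5]]  # the corridor gives uninformative observations
C = [0.1, 0.9]                            # the agent prefers to see the drop-off
predicted_states = {
    "stay":   [0.95, 0.05, 0.0],
    "move":   [0.14, 0.86, 0.0],
    "wander": [0.0, 0.0, 1.0],
}

scores = {a: expected_free_energy(q, A, C) for a, q in predicted_states.items()}
best_action = min(scores, key=lambda a: scores[a][0])  # lower G is better
```

Here "move" has the lowest expected free energy because its predicted observations are close to the preferences. "wander" carries the largest ambiguity term because the corridor's observations say nothing about the state. Keeping the two terms separate makes it easy to see which criterion drives each score.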

Once the agent has ranked the possible actions, or sequences of actions, it can select the optimal one. Currently, the agent selects the highest-ranking action or sequence of actions, though other action-selection strategies are possible.
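The difference between selection strategies can be sketched as below, assuming each action already has an expected free energy score where lower is better. The scores, the `precision` parameter, and both function names are hypothetical; the softmax sampling rule is shown only as one example of an alternative strategy.

```python
import math
import random

def select_deterministic(scores):
    """Pick the highest-ranking action, i.e. the one with the lowest score."""
    return min(scores, key=scores.get)

def select_stochastic(scores, precision, rng):
    """Sample an action with probability proportional to exp(-precision * score)."""
    actions = list(scores)
    lowest = min(scores.values())  # subtract the minimum for numerical stability
    weights = [math.exp(-precision * (scores[a] - lowest)) for a in actions]
    return rng.choices(actions, weights=weights, k=1)[0]

# Hypothetical expected free energy scores for three actions.
scores = {"stay": 1.92, "move": 0.38, "wander": 1.20}

rng = random.Random(0)
greedy_choice = select_deterministic(scores)                     # "move"
sampled_choice = select_stochastic(scores, precision=1.0, rng=rng)
```

A large `precision` makes the stochastic rule behave like the deterministic one, while a small value spreads the choices more evenly across actions.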

Finally, the agent can optionally execute the action. Whether or not it does so depends on the application of the model. For some applications, one may want the agent simply to alert a human user to the ranked actions or sequences of actions, so that they can inform a broader decision-making process. In other cases, the active inference agent may have physical access to the real world (such as a robot) or a way to interact with some software system, so that it can directly affect the process.

Learning

In the section about parameter learning we saw that agents could learn the probabilities for each factor in the factor graph from the observations. In a dynamic scenario involving time, these probabilities may be subject to change because the real-world process being modeled may change. To account for this, active inference agents perform online parameter learning. That is, each observation received from the environment may be used to update factor probabilities as needed, so that the agent's model stays consistent with the real world and performs accurate inferences (provided the observations it receives are of sufficient quality).
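A simple way to picture online parameter learning is to keep a table of counts for each state-observation pair and to add belief-weighted evidence every time an observation arrives. The sketch below follows that count-based scheme for the likelihood of the rain example. It is an illustrative assumption, not necessarily the update rule used in Genius, and the function names, `learning_rate` parameter, and numbers are hypothetical.

```python
def update_counts(counts, belief, observation, learning_rate=1.0):
    """Add belief-weighted evidence for `observation` to each state's counts."""
    for state, q_s in belief.items():
        counts[state][observation] += learning_rate * q_s

def counts_to_probabilities(counts):
    """Normalize each row of counts into P(observation | state)."""
    probs = {}
    for state, row in counts.items():
        total = sum(row.values())
        probs[state] = {o: c / total for o, c in row.items()}
    return probs

# Start from uniform counts, meaning no initial knowledge of the likelihood.
counts = {
    "raining": {"wet": 1.0, "not wet": 1.0},
    "not raining": {"wet": 1.0, "not wet": 1.0},
}

# Hypothetical stream of (belief over states, observation) pairs.
stream = [
    ({"raining": 0.9, "not raining": 0.1}, "wet"),
    ({"raining": 0.2, "not raining": 0.8}, "not wet"),
    ({"raining": 0.8, "not raining": 0.2}, "wet"),
]
for belief, observation in stream:
    update_counts(counts, belief, observation)  # one update per time step

likelihood = counts_to_probabilities(counts)
# likelihood["raining"]["wet"] = 2.7 / 3.9, roughly 0.69
```

Because the counts are updated after every observation, the estimated probabilities can keep tracking the environment if the underlying process drifts over time.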

In practice, learning is itself a process of expected free energy minimization. Agents may at times choose to spend multiple actions optimizing parameters before they pursue their goals. Like all other processes in active inference, this is achieved without the need for ad hoc rules and specific models.
