Probabilistic inference

The probabilistic model that we created and visualized as a factor graph in earlier tutorials can be queried to understand how probabilities change in response to some given data. In this tutorial we investigate how to perform probabilistic inference by conditioning and Bayesian inference.

In causal models, probabilistic inference can be seen as a form of "forward causation" (causes to effects), while Bayesian inference can be seen as a form of "inverse causation" (effects to causes). Bayesian networks are not inherently causal; they specify dependencies, though these dependencies might capture causal effects from the real world. True causal networks require specific methodology. See (Gelman 2011) for more detail.

Inference by conditioning entails querying the model to answer "forward inference" questions. That is, we might want to know how the probability of one variable is affected by the state of a variable or variables on which it depends.

Returning to the sprinkler example, we may want to know the probability of the grass being wet given that we observe that the sprinkler is on and that it is raining. Inference by conditioning lets us take this data (the evidence) and infer the probability distribution over the grass being wet or not wet.

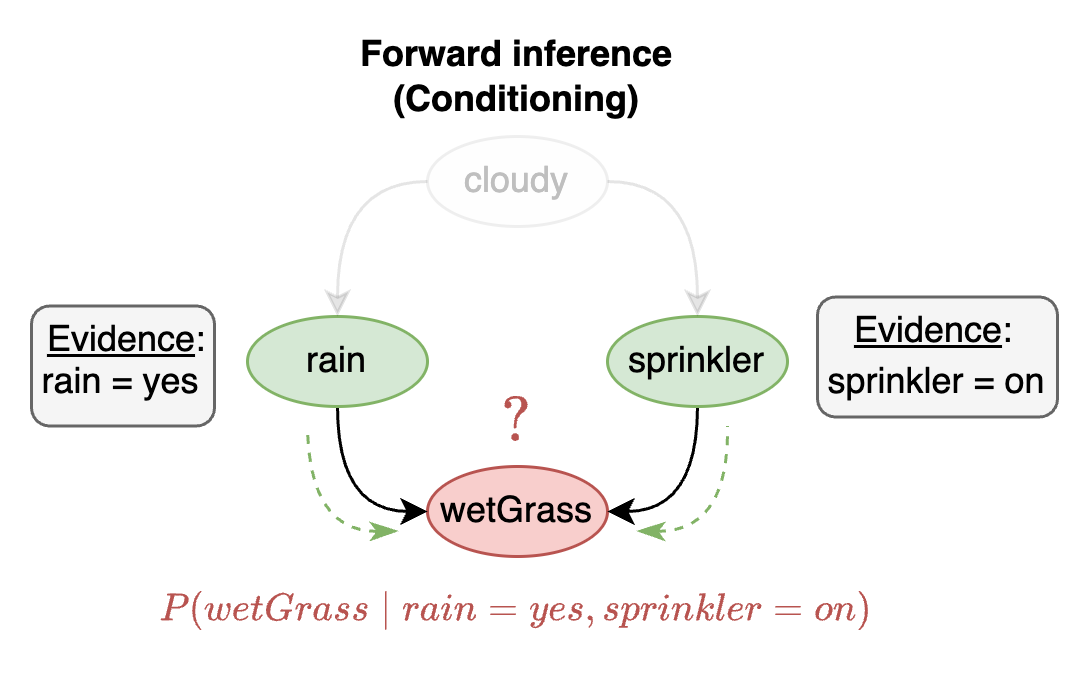

Mathematically, this is like asking P(wetGrass∣Rain=yes,Sprinkler=on). In words: "What is the probability of the grass being wet given that it is raining and the sprinkler is on?" Visually, this process of inference is depicted below:

This visual shows that wetGrass is the variable whose probability distribution we wish to infer (red). The evidence we have is that rain=yes and sprinkler=on. The dotted green arrows show this evidence pointing to the variable being inferred. Notice that the evidence points along the direction of the dependency lines (black). This is what we mean by forward inference or conditioning. In other words, forward inference questions tell you what happens to the probability distribution of a variable when we observe data about the variables it depends on.
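As a minimal sketch of forward inference, the query above can be answered by enumerating the joint distribution and keeping only the states that agree with the evidence. The conditional probability values below are illustrative assumptions (commonly used textbook numbers for the sprinkler network), not values taken from this tutorial, and the helper names `bern`, `joint`, and `query` are hypothetical.

```python
from itertools import product

# Illustrative conditional probability tables (assumed values, not from the tutorial).
P_CLOUDY = 0.5
P_SPRINKLER_ON = {True: 0.1, False: 0.5}  # P(sprinkler=on | cloudy)
P_RAIN = {True: 0.8, False: 0.2}          # P(rain=yes | cloudy)
P_WET = {                                 # P(wetGrass=yes | sprinkler, rain)
    (True, True): 0.99,
    (True, False): 0.9,
    (False, True): 0.9,
    (False, False): 0.0,
}


def bern(p, value):
    """Probability of a binary value when P(value=True) is p."""
    return p if value else 1.0 - p


def joint(cloudy, sprinkler, rain, wet):
    """Joint probability, factorized along the dependency lines of the graph."""
    return (
        bern(P_CLOUDY, cloudy)
        * bern(P_SPRINKLER_ON[cloudy], sprinkler)
        * bern(P_RAIN[cloudy], rain)
        * bern(P_WET[(sprinkler, rain)], wet)
    )


def query(target, evidence):
    """Return P(target=True | evidence) by summing the joint over consistent states."""
    names = ("cloudy", "sprinkler", "rain", "wet")
    totals = {True: 0.0, False: 0.0}
    for values in product([True, False], repeat=len(names)):
        world = dict(zip(names, values))
        if all(world[name] == value for name, value in evidence.items()):
            totals[world[target]] += joint(**world)
    return totals[True] / (totals[True] + totals[False])


# P(wetGrass=yes | Rain=yes, Sprinkler=on)
p_wet = query("wet", {"rain": True, "sprinkler": True})
# P(wetGrass=yes | Rain=yes), with the sprinkler left unobserved
p_wet_rain_only = query("wet", {"rain": True})
print(f"{p_wet:.2f} {p_wet_rain_only:.2f}")
```

Because both variables that wetGrass depends on are observed, the first query simply recovers the assumed table entry of 0.99. The second query, with the sprinkler unobserved, has to average over the sprinkler's possible states and comes out at about 0.92 under these assumed numbers.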

Bayesian inference

Bayesian inference entails querying the model to answer "reverse inference" questions. Instead of following the chain of reasoning of a variable in the forward direction, we reverse the relationship and ask a question in the other direction.

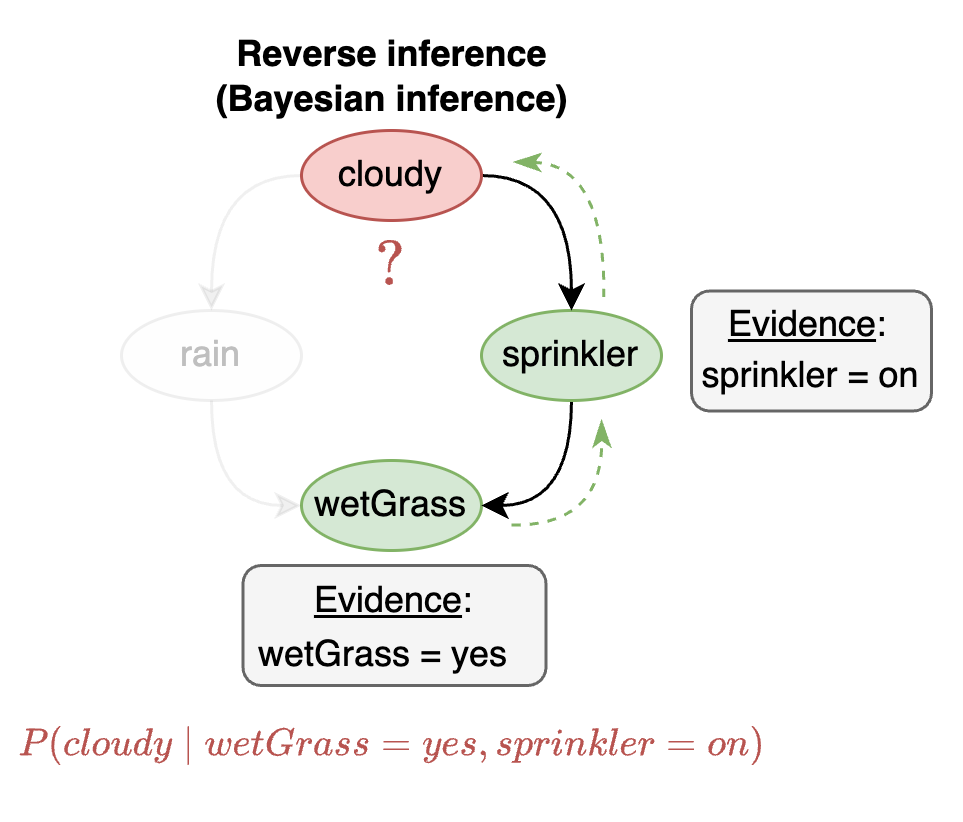

For example, in the sprinkler example, we could ask "what is the probability of it being cloudy given that we observe that the grass is wet and the sprinkler is on?" Or, "what is the probability of it being cloudy given that we observe that the grass is wet and it is raining?" In probabilistic notation, these two cases correspond to

P(cloudy∣wetGrass=yes,Sprinkler=on)

P(cloudy∣wetGrass=yes,Rain=yes)

The visualization below shows Bayesian inference for the first of these two queries:

This visual shows that cloudy is the variable whose probability distribution we wish to infer (red). The evidence we have is that wetGrass=yes and sprinkler=on. The dotted green arrows show this evidence pointing to the variable being inferred. Notice that the evidence is pointing against the direction of the dependency lines (black). This is what we mean by reverse inference or Bayesian inference.

Solving reverse inference questions relies on the application of Bayes' theorem. Bayesian inference is important because we often want to ask reverse inference questions about the real world process we are modeling.
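For the first query, Bayes' theorem reads P(cloudy∣evidence) = P(evidence∣cloudy) · P(cloudy) / P(evidence), where the evidence is wetGrass=yes and Sprinkler=on. The sketch below works through that calculation in plain Python. The probability tables are illustrative assumptions (commonly used textbook numbers for the sprinkler network), not values from this tutorial, and the function name `likelihood` is hypothetical.

```python
# Illustrative conditional probability tables (assumed values, not from the tutorial).
PRIOR_CLOUDY = {True: 0.5, False: 0.5}    # P(cloudy)
P_SPRINKLER_ON = {True: 0.1, False: 0.5}  # P(sprinkler=on | cloudy)
P_RAIN = {True: 0.8, False: 0.2}          # P(rain=yes | cloudy)
P_WET = {                                 # P(wetGrass=yes | sprinkler, rain)
    (True, True): 0.99,
    (True, False): 0.9,
    (False, True): 0.9,
    (False, False): 0.0,
}


def likelihood(cloudy):
    """P(wetGrass=yes, Sprinkler=on | cloudy), summing out the unobserved rain."""
    p_wet_given_sprinkler_on = sum(
        (P_RAIN[cloudy] if rain else 1.0 - P_RAIN[cloudy]) * P_WET[(True, rain)]
        for rain in (True, False)
    )
    return P_SPRINKLER_ON[cloudy] * p_wet_given_sprinkler_on


# Bayes' theorem: posterior = likelihood * prior / evidence
unnormalized = {c: likelihood(c) * PRIOR_CLOUDY[c] for c in (True, False)}
evidence = sum(unnormalized.values())  # P(wetGrass=yes, Sprinkler=on)
posterior = {c: value / evidence for c, value in unnormalized.items()}

print(f"P(cloudy=yes | wetGrass=yes, Sprinkler=on) = {posterior[True]:.3f}")
```

Under these assumed numbers the posterior probability of cloudy is about 0.175, well below the prior of 0.5. That matches intuition: in this parameterization the sprinkler is rarely on when it is cloudy, so observing it on is evidence against clouds.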

Latent variable inference

Sometimes there may be variables in our probabilistic model for which we have no data. These are called unobserved, hidden, or latent variables. Such variables are unobserved in the sense that there are no observations from the real world available for them.

For example, suppose that we are in a medical diagnosis setting where we wish to know if a patient has a bacterial or viral infection. Although we may not be able to measure this variable directly without further tests, we know that it is directly tied to other variables we can observe such as the presence or absence of a cough, fatigue, or fever. These three variables can thus be used to infer if a bacterial or viral infection is more likely.

However, if no data are available for a variable, how can we estimate its probability? The answer is that the variables we can observe are often indirectly related to the variables we are interested in but cannot observe. Once we represent these unobserved latent variables in our model, we can use the data we do have to infer the probabilities over them.

In Genius, latent variables can easily be added to your model. Once added, their probabilities will automatically be calculated using existing data. For more information see the model editor overview or the Python SDK overview page.

Technically, latent variable inference is still a form of Bayesian inference. The difference is that, given some data, we are inferring the probability over an unobserved variable rather than over an observed one.
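To make the medical example concrete, here is a minimal plain-Python sketch (not the Genius SDK) that infers the latent infection type from the three observable symptoms. It assumes the symptoms are conditionally independent given the infection type, which is a modeling simplification introduced here, and every probability in it is a hypothetical number chosen for illustration. The function name `infer_infection` is likewise hypothetical.

```python
# Hypothetical prior over the latent variable (assumed for illustration only).
PRIOR = {"bacterial": 0.3, "viral": 0.7}

# Hypothetical P(symptom present | infection type), assumed for illustration only.
P_SYMPTOM = {
    "bacterial": {"cough": 0.4, "fatigue": 0.6, "fever": 0.8},
    "viral": {"cough": 0.7, "fatigue": 0.7, "fever": 0.4},
}


def infer_infection(observed):
    """Posterior over the latent infection type given observed symptoms.

    `observed` maps symptom names to True (present) or False (absent).
    Symptoms are assumed conditionally independent given the infection type.
    """
    scores = {}
    for infection, prior in PRIOR.items():
        score = prior
        for symptom, present in observed.items():
            p = P_SYMPTOM[infection][symptom]
            score *= p if present else 1.0 - p
        scores[infection] = score
    total = sum(scores.values())  # probability of the observed symptoms
    return {infection: score / total for infection, score in scores.items()}


posterior = infer_infection({"cough": False, "fatigue": True, "fever": True})
for infection, probability in posterior.items():
    print(f"{infection}: {probability:.3f}")
```

With these made-up numbers, a patient with fatigue and fever but no cough ends up with a posterior of roughly 0.6 for a bacterial infection, even though the prior favored a viral one. The infection type itself is never observed; its distribution is obtained entirely from the symptoms.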
