Partially observable Markov decision processes (POMDP)

An active inference agent uses a special kind of probabilistic model known as a partially observable Markov decision process (POMDP). This model relies upon the following assumptions:

The system being modeled is partially observable: some of its variables (the states) cannot be observed directly. A state is a description of the configuration of the system of interest, which is assumed to change over time.

The agent can decide on courses of action and execute them to affect its environment.

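The model assumes that the current state of the environment contains all the information the agent needs to decide on a course of action. The next state depends only on the current state and the chosen action, not on the earlier history (the Markov property).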
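To make the Markov property concrete, here is a minimal Python sketch of a hypothetical environment: a room that is either cold or warm, with a heater the agent can switch on or off. The states, actions, and probabilities are invented for illustration. The next state is drawn using only the current state and the chosen action, so two histories that end in the same state lead to the same prediction.

```python
import random
from typing import Dict, List, Tuple

# Hypothetical toy environment (invented for illustration):
# TRANSITIONS[(state, action)] gives p(next_state | state, action).
TRANSITIONS: Dict[Tuple[str, str], Dict[str, float]] = {
    ("cold", "off"): {"cold": 0.9, "warm": 0.1},
    ("cold", "on"): {"cold": 0.2, "warm": 0.8},
    ("warm", "off"): {"cold": 0.3, "warm": 0.7},
    ("warm", "on"): {"cold": 0.0, "warm": 1.0},
}


def next_state_distribution(history: List[str], action: str) -> Dict[str, float]:
    """Distribution over the next state given the full history of states.

    Under the Markov property only the most recent state matters, so every
    entry of `history` except the last one is ignored.
    """
    return TRANSITIONS[(history[-1], action)]


def step(state: str, action: str, rng: random.Random) -> str:
    """Sample the environment's next state from the current state and action."""
    dist = TRANSITIONS[(state, action)]
    return rng.choices(list(dist), weights=list(dist.values()), k=1)[0]


# Two different histories that end in the same state give the same prediction.
print(next_state_distribution(["warm", "warm", "cold"], "on"))  # {'cold': 0.2, 'warm': 0.8}
print(next_state_distribution(["cold", "cold", "cold"], "on"))  # {'cold': 0.2, 'warm': 0.8}

# Simulate a short trajectory: each step looks only at the latest state.
rng = random.Random(42)
trajectory = ["cold"]
for action in ["on", "on", "off"]:
    trajectory.append(step(trajectory[-1], action, rng))
print(trajectory)
```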

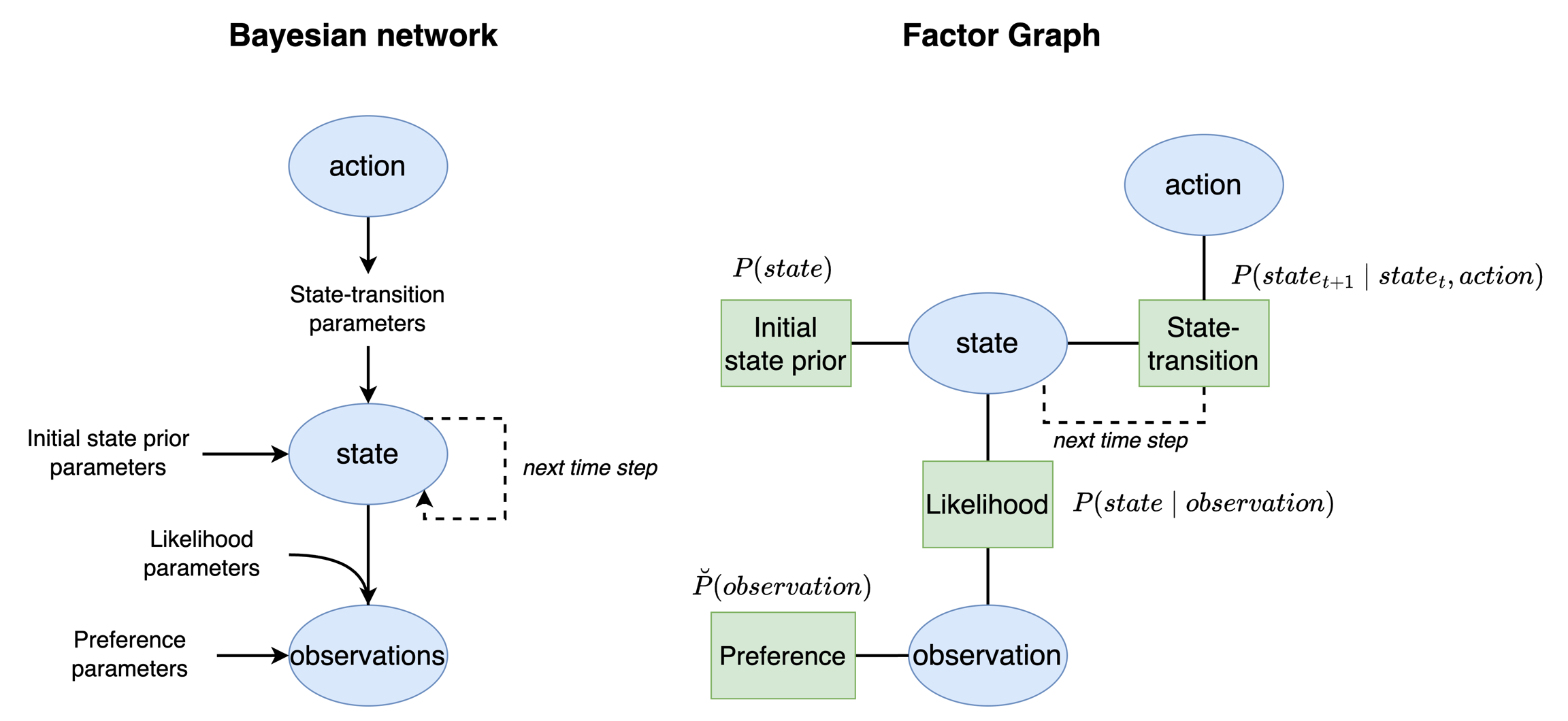

Below is a diagram that depicts an active inference agent's POMDP model as a Bayesian network (left) and as a factor graph (right).

This model is the agent's representation of the structure of the real-world process it is modeling. Although the model may look complicated, it is intuitive to interpret, especially in its factor graph form. The factor graph consists of three variables: a state, an action, and an observation. What these variables represent depends on the modeling problem at hand, and as modelers we must specify them when we build an active inference model. The model also consists of four factors: an initial state prior, a likelihood, a state transition, and a preference. The following paragraphs describe how these components interact, focusing on the factor graph version of the model in the figure:

The agent believes that the world is in a particular state. Before receiving any observations, the agent holds a prior belief about which state this is, encoded in the initial state prior factor. This belief comes from past interactions with the world, although as modelers we are free to specify it however we like when we build an active inference agent.

The agent believes that whatever state the world is in generates an observation. Observations are generated according to a likelihood factor, which encodes the probability that a given state produces a given observation.

The agent believes that environment states change over time according to a state-transition factor. Strictly speaking, this factor connects the current state to a new state variable for the next time point, as indicated by the dotted edge in the diagram.

The agent believes that how the environment transitions depends on the action it chooses. But which action should the agent choose? Actions are chosen to satisfy the agent's preferences, which encode its goals or, metaphorically, what it "desires" or expects the world to be like.
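The four factors can be written down concretely. Below is a minimal pure-Python sketch under several assumptions. The names `A`, `B`, `C`, `D` follow a convention common in the active inference literature (likelihood, transitions, preferences, initial prior). The thermostat problem is hypothetical. The action rule only scores how well the observations predicted one step ahead match the preferences. Full active inference instead scores actions by expected free energy, which adds an information-seeking term, so treat this rule as a simplification.

```python
import math
from dataclasses import dataclass
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def mat_vec(M: Matrix, v: Vector) -> Vector:
    """Matrix-vector product: (M v)[i] = sum_j M[i][j] * v[j]."""
    return [sum(M[i][j] * v[j] for j in range(len(v))) for i in range(len(M))]


@dataclass
class POMDP:
    D: Vector        # initial state prior: D[s] = p(s_0 = s)
    A: Matrix        # likelihood: A[o][s] = p(o | s)
    B: List[Matrix]  # state transitions: B[a][s_next][s] = p(s_next | s, a)
    C: Vector        # preferences: a strictly positive distribution over observations

    def predicted_observations(self, state_belief: Vector, action: int) -> Vector:
        """Observations the agent expects after taking `action` from `state_belief`."""
        next_states = mat_vec(self.B[action], state_belief)  # state-transition factor
        return mat_vec(self.A, next_states)                  # likelihood factor

    def preference_score(self, state_belief: Vector, action: int) -> float:
        """Expected log-preference of the predicted observations (higher is better)."""
        expected_obs = self.predicted_observations(state_belief, action)
        return sum(p * math.log(c) for p, c in zip(expected_obs, self.C))

    def choose_action(self, state_belief: Vector) -> int:
        """Pick the action whose predicted observations best match the preferences."""
        return max(range(len(self.B)), key=lambda a: self.preference_score(state_belief, a))


# Hypothetical thermostat example.
# states: 0 = cold, 1 = warm; observations: 0 = reads_cold, 1 = reads_warm;
# actions: 0 = heater_off, 1 = heater_on.
model = POMDP(
    D=[0.7, 0.3],                  # before any observation, the room is probably cold
    A=[[0.9, 0.2],                 # p(reads_cold | cold), p(reads_cold | warm)
       [0.1, 0.8]],                # p(reads_warm | cold), p(reads_warm | warm)
    B=[[[0.9, 0.3], [0.1, 0.7]],   # heater_off: the room tends to cool down
       [[0.2, 0.0], [0.8, 1.0]]],  # heater_on: the room tends to warm up
    C=[0.1, 0.9],                  # the agent "prefers" to see reads_warm
)

print(model.choose_action(model.D))  # 1 (heater_on)
```

Here the prior `D` serves as the agent's current belief because no observation has arrived yet. Once observations come in, the belief would be updated by Bayesian inference before an action is chosen.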

Although we have described the POMDP model with a single action at each time point, active inference agents can also project into the future over a sequence of time points. Such a sequence of actions, one for each time point, is called a policy (or plan).
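One way to picture policies is to enumerate every action sequence up to a fixed horizon and score each by rolling the state belief forward through the transition and likelihood factors. In this minimal Python sketch, the thermostat model is hypothetical. The scoring rule (expected log-preference summed over future time points, with no information-seeking term) is a simplification of how active inference evaluates policies.

```python
import itertools
import math
from typing import List, Sequence, Tuple

# Hypothetical thermostat model (states: 0 = cold, 1 = warm;
# observations: 0 = reads_cold, 1 = reads_warm; actions: 0 = heater_off, 1 = heater_on).
A = [[0.9, 0.2], [0.1, 0.8]]    # A[o][s] = p(o | s)
B = [[[0.9, 0.3], [0.1, 0.7]],  # B[a][s_next][s] = p(s_next | s, a)
     [[0.2, 0.0], [0.8, 1.0]]]
C = [0.1, 0.9]                  # preferred distribution over observations


def mat_vec(M: List[List[float]], v: List[float]) -> List[float]:
    return [sum(M[i][j] * v[j] for j in range(len(v))) for i in range(len(M))]


def enumerate_policies(n_actions: int, horizon: int) -> List[Tuple[int, ...]]:
    """Every sequence of `horizon` actions: n_actions ** horizon candidate policies."""
    return list(itertools.product(range(n_actions), repeat=horizon))


def policy_score(state_belief: List[float], policy: Sequence[int]) -> float:
    """Sum over future time points of the expected log-preference of predicted observations."""
    score, belief = 0.0, state_belief
    for action in policy:
        belief = mat_vec(B[action], belief)  # project the state one step forward
        expected_obs = mat_vec(A, belief)    # observations expected at that time point
        score += sum(p * math.log(c) for p, c in zip(expected_obs, C))
    return score


policies = enumerate_policies(n_actions=len(B), horizon=2)
best = max(policies, key=lambda policy: policy_score([0.5, 0.5], policy))
print(len(policies), best)  # 4 (1, 1)
```

The number of policies grows as `n_actions ** horizon`, so exhaustive enumeration like this is only practical for small action sets and short horizons.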

In this model, states and actions are unobserved; the agent has access only to the observations it receives from the environment. From these observations alone, the agent must use Bayesian inference to infer the current state and which action is most likely to satisfy its preferences or goals.
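The state-inference half of this step can be illustrated with Bayes' rule: the posterior over states is proportional to the likelihood of the observation times the prior. This minimal Python sketch assumes a hypothetical noisy thermometer with two states and two observations. It performs exact inference for a single observation, which is the simplest case.

```python
from typing import List

# Hypothetical noisy-thermometer likelihood A[o][s] = p(o | s).
# states: 0 = cold, 1 = warm; observations: 0 = reads_cold, 1 = reads_warm.
A = [[0.9, 0.2],
     [0.1, 0.8]]


def infer_state(prior: List[float], observation: int) -> List[float]:
    """Bayes' rule: p(s | o) = p(o | s) p(s) / sum over s' of p(o | s') p(s')."""
    unnormalized = [A[observation][s] * prior[s] for s in range(len(prior))]
    evidence = sum(unnormalized)  # p(o): probability of this observation under the model
    return [u / evidence for u in unnormalized]


posterior = infer_state(prior=[0.5, 0.5], observation=1)  # the thermometer reads warm
print(posterior)  # approximately [0.111, 0.889]
```

Active inference implementations typically approximate this posterior with variational inference rather than computing it exactly, which matters once models have many states or time steps.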

Note that the formulation described here is very generic: the model applies to many types of real-world processes that change over time, and it does not require a highly engineered model for every specific scenario.

For concrete examples of active inference, we provide three sample models. See the active inference examples page for more detail.
